The Horrific Deepfake Scandal Of South Korea Should Scare Everyone

It's not just deepfakes. It's personal information, stalking, and child victims. Trigger warning: CSA, CP, assault, abuse

My article about the misogyny in South Korea has been going viral. For the most part, people have been supportive of me uncovering this and actually talking to my Korean friend who currently lives there with his romantic partners.

A couple of people have actually encouraged me to go deeper in my research — and a large part of me wishes I didn’t. This was an article that really triggered me, but if I don’t talk about it, international pressure to end this shit won’t happen.

South Korea’s deepfake problem has become a national crisis that is destroying the very fabric of their society. Readers, if you have a weak stomach, please skip this article.

Deepfakes have become a growing internet sex crime in recent years.

For those not in the know, deepfakes are AI-made falsified videos and photos of a person. In most cases, the deepfakes are used to blackmail, intimidate, and alienate victims. They are generally X-rated.

In America, deepfakes most often target public figures like porn stars. I’ve also seen at least one deepfake of a runway model making the rounds online.

Deepfakes are a form of sexual violence. They intimidate, violate, and harm the victim. What’s alarming about this is that you only need a couple of photos to create a deepfake video.

Korea has been seeing a massive increase in highly targeted deepfake trades.

In Korea, the chat app Telegram is the go-to for men and boys who want deepfakes. There, incels and predators join chatrooms to request them.

The chatrooms are not your typical sexy chat time. No, they are chatrooms that are alarmingly niche, detailed, and stalker-like. The chatrooms involve groups of people talking about the daily habits of their victims, even going so far as to post school ID numbers and names of family members.

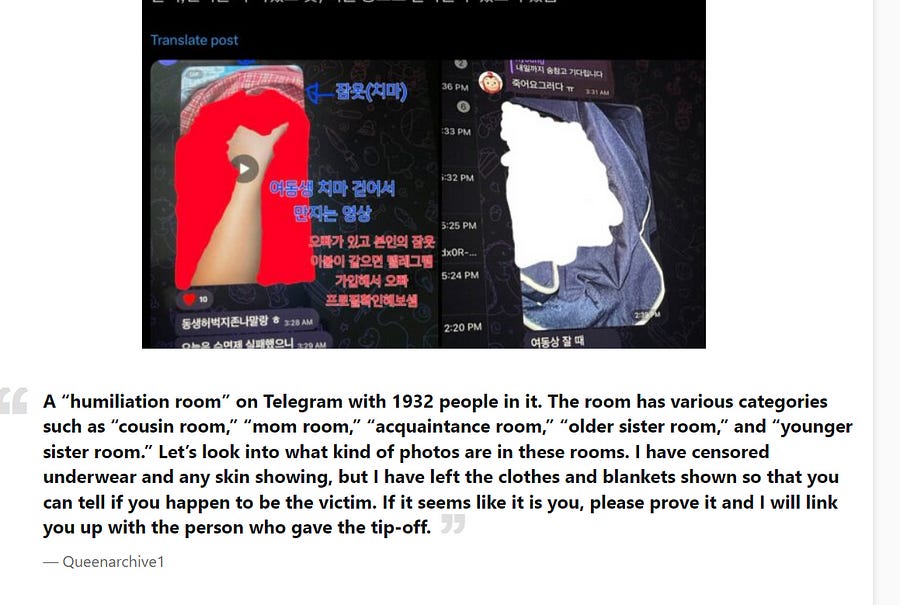

Among Korean locals, these chatrooms are known as “humiliation rooms.” According to my research, these chats:

- Are divided by women’s names, local schools, jobs, and more. The chat operators demand that requesters give them the victims’ names, schools or jobs, relatives’ names, and more. In other words, it’s not just deepfaking. It’s doxxing and stalking.

- Involve major money transactions. Some of these chat rooms are funneling $200,000 or more a month.

- Are absolutely massive. A chat that was focused on ONLY ONE SCHOOL had over 2,000 members. Rotten Mango, a YouTuber who covered this, explained that membership is so high that some estimates put the number of men and boys in these chats in the hundreds of thousands.

- Are most commonly frequented by teenage boys. Some of the perpetrators are even in middle school.

- Are also split into rooms by category. One chat that was uncovered was devoted to deepfakes of users’ younger sisters. Another was a humiliation room entirely devoted to deepfakes of users’ mothers, made at the users’ own request.

- Use clothed photos found on Instagram, in yearbooks, in family photos, and more. This is precisely why women are pulling their photographs off social media entirely. Sadly, that alone is not enough to prevent this from happening.

The women and girls who are being targeted know nothing about the people who are hurting them. Yet, their attackers know everything. Attackers will often harass them via social media, phone, and more.

Attackers will often use these images to blackmail or intimidate the girls into giving them real images.

This is a form of internet crime known as “sextortion,” a growing issue stateside. The more typical way this happens is that a faceless person will ask for nudes or dick pics. Then, they have ammo and threaten to expose you if you don’t do what they say.

What is happening in Korea is a step beyond the typical methods used in America. It’s meant to make you feel unsafe, primarily because you don’t even have to send nudes for this to happen.

The typical age of the victims is shocking.

I want to emphasize something. While many of the victims are women who are in college or older, this is a major scandal because humiliation rooms are targeting schools.

To date, over 500 schools have been implicated in deepfake Telegram scandals. Many, if not most, of the victims are under the age of 16. Most of the perpetrators currently caught are either teens or young adult men.

Some of the victims are middle school age, roughly 11 to 13 years old. Per at least one source I used in my research (the Rotten Mango podcast), at least one toddler was also victimized.

I also want to point out that, while the victims are almost always female, male victims have also been found in these chatrooms.

But who’s doing this?

That’s the most alarming part about all this: a large portion of the perpetrators are middle school boys, teenage boys, and young adults. Deepfake victims have been harmed by their brothers, boyfriends, friends, and classmates.

Many of the teenagers do not seem to understand the damage they are doing to their girlfriends, friends, or even their sisters. Officials seem to be realizing (slowly) that this could lead to lasting damage to their society.

Sex offender counselor Lee Myung-hwa noted, “For teenagers, deepfakes have become part of their culture, they’re seen as a game or a prank.”

It’s anything but. It’s a traumatizing, terrorizing assault on the victims. To make matters worse, estimates suggest that there are between 60,000 and 230,000 perpetrators.

President Yoon Suk Yeol said, “Some may dismiss it as a mere prank, but it is clearly a criminal act that exploits technology under the shield of anonymity.”

Cries for help are falling on deaf ears at Telegram.

Telegram became famous for its role in hiding the assailants’ identities — to the point that the South Korean government begrudgingly went to the company to ask for help in investigating the rooms.

Telegram and its founder, Pavel Durov, refused to work with the government. Eventually, Durov was arrested in France for aiding in the distribution of child pornography.

That charge was not a direct result of South Korea’s deepfake scandal, though the timing overlapped. It was brought by French authorities, not Korea’s own government.

Of course, Korea has, amid growing outrage, paid lip service to the international community. But actually prosecuting people caught in the act is another story.

South Korean officials aren’t really practicing what they preach.

Police in South Korea have been dragging their feet when it comes to pursuing criminal charges for the perpetrators. Police often dissuade women from pursuing charges, saying it would “ruin both parties’ reputations.”

Parents of perpetrators are also helping in the coverup, with many mothers saying that their child “would never do that” despite ample evidence to the contrary. Some parents even admit their children did it. They just don’t want the ruined reputation.

This is, sadly, par for the course. South Korea has become notorious for failing to protect women or charge the perpetrators of sex crimes.

A justified paranoia and fear is growing in Korean women.

The effects of the deepfake scandal are devastating — and they’re affecting women in ways many wouldn’t expect. Women throughout Korea are now taking their photos off social media entirely out of fear that they may be next. Sadly, that doesn’t make them immune to this harassment.

Many of the women and children targeted have photos surreptitiously taken of them while in public. They can’t prevent it from happening. Rather, they have to deal with life in a panopticon where they’re a moving target — never safe from their all-seeing invisible stalkers.

Seeing the scandal grow makes it abundantly clear that South Korea does not care about its women. Moreover, South Korean women are correct to avoid men in their lives. After all, it’s the only defense they have against the deepfakes the government refuses to address.

This, ladies, enbies and gents, is what a country that failed its women looks like.